The Day Everything Broke: What We Learned From Our Biggest Automation Fail

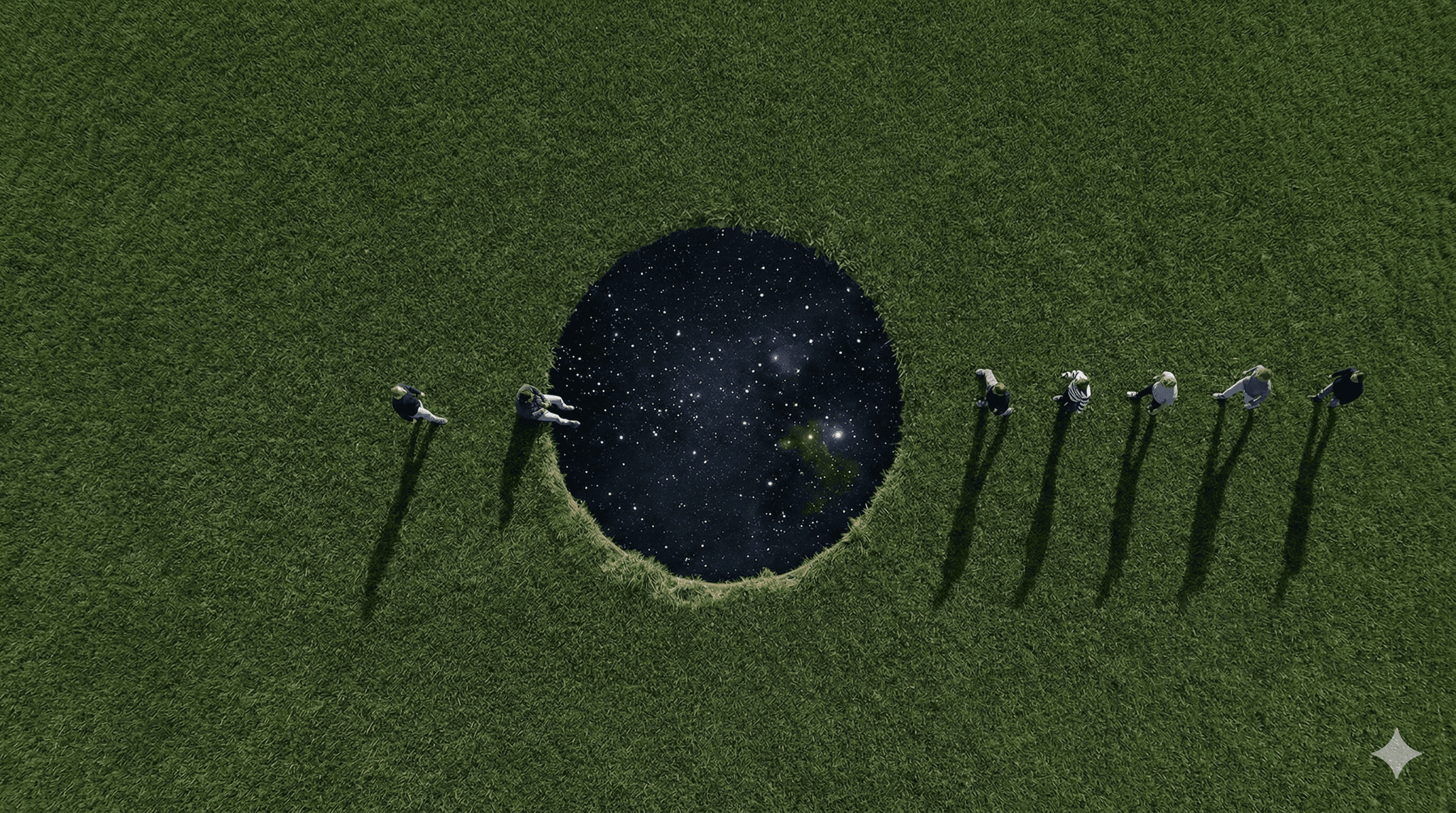

Most automation stories are success stories. "We saved 20 hours per week!" "We eliminated 80% of manual work!" "Everything works perfectly!"

But not every automation project succeeds. Some fail. Some fail spectacularly.

We've seen it happen across the industry. Projects that started with promise and ended in disaster. Systems that broke when they should have worked. Teams that learned hard lessons the difficult way.

This is one of those stories. The characters are fictional, but the mistakes are real. The patterns are common. The lessons are valuable.

Here's what can go wrong, why it happens, and how to avoid it.

The Project That Seemed Perfect

Let's call it Project Phoenix. (The name was ironic in hindsight.)

A company—let's call them TechCorp—decided to automate their entire invoice processing workflow. They were processing hundreds of invoices per week, manually entering data into their accounting system, and it was taking forever.

The goal: fully automated invoice processing. From email receipt to entry in the accounting system. No human intervention needed.

It seemed doable. They had the tools. They had the budget. They had confidence.

That was their first mistake.

What They Built

They built an AI agent that would:

- Monitor their email inbox for invoices

- Extract data from PDF invoices (vendor, amount, date, line items)

- Validate the data against their vendor database

- Match invoices to purchase orders

- Enter everything into their accounting system

- Send confirmation emails

- Flag any discrepancies for review

It was ambitious. It was comprehensive. It was... too much.

The First Red Flags (That They Ignored)

Looking back, there were warning signs. They just didn't pay attention to them.

Red Flag 1: Scope Creep

What started as "automate invoice data entry" became "automate the entire invoice workflow." Every meeting, there was a new requirement. "Can it also match purchase orders?" "Can it handle international invoices?" "Can it integrate with our approval system?"

They kept saying yes. They kept adding features. They kept expanding the scope.

They should have said no. Or at least, "Let's start smaller."

Red Flag 2: Insufficient Testing

They tested it. But they tested it in a controlled environment. With clean, well-formatted invoices. With perfect data. With ideal conditions.

They didn't test it with:

- Messy, scanned PDFs

- Handwritten invoices

- Invoices in foreign languages

- Invoices with missing information

- Other edge cases, such as duplicate invoices or invoices split across several pages

They tested for the best case. They should have tested for the worst case.

Red Flag 3: No Rollback Plan

They built it. They deployed it. They turned it on.

But they didn't have a plan for what to do if it broke. No way to quickly turn it off. No way to revert to the old process. No safety net.

They assumed it would work. That was naive.

The Day Everything Broke

It was a Tuesday. The system had been running for about a week. Things seemed fine. A few minor issues, but nothing major.

Then it happened.

The Cascade Failure

An invoice came in with an unusual format. The system couldn't parse it. Instead of flagging it for review, it tried to process it anyway. It made up data. Entered garbage into the accounting system.

But that wasn't the worst part.

The garbage data triggered a validation error. The system tried to fix it. Made it worse. Tried again. Made it worse again. Created a loop.

Within an hour, hundreds of invoices had been processed incorrectly. Data was corrupted. The accounting system was a mess.

The Panic

The accounting team was panicking. Numbers didn't match. Reports were wrong. They couldn't trust their data.

They had to shut everything down. Immediately. Manually. There was no kill switch.

It took them 4 hours to stop the system. Another 8 hours to assess the damage. Days to fix what was broken.

What Went Wrong (The Honest Post-Mortem)

This pattern—or variations of it—plays out across the industry. Here's what typically goes wrong:

Mistake 1: Over-Engineering

They tried to automate everything at once. The entire workflow. Every edge case. Every possible scenario.

They should have started smaller. Automate one step. Get it working perfectly. Then add the next step.

Mistake 2: Insufficient Testing

They tested in ideal conditions. They didn't test with real-world messiness. They didn't test edge cases. They didn't test failure scenarios.

They should have tested with the worst invoices. The messiest ones. The ones that would break the system.

Mistake 3: No Safety Mechanisms

They didn't have:

- A kill switch

- A rollback plan

- Human oversight

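- Error limits (a cap on failures before processing halts)

- Validation checkpoints (checks that stop bad data before it reaches the accounting system)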

They trusted the system too much. They should have trusted it less.
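
To make the first and fourth items on that list concrete, here is a minimal sketch of a kill switch combined with an error limit. Nothing here comes from TechCorp's system: `SafetyGate`, `run_batch`, the stop-file convention, and the default limit of three consecutive failures are all hypothetical names and values chosen for illustration.

```python
from pathlib import Path


class SafetyGate:
    """Hypothetical gate: stops a batch on operator request or repeated failures."""

    def __init__(self, stop_file: Path, max_consecutive_errors: int = 3):
        self.stop_file = stop_file
        self.max_consecutive_errors = max_consecutive_errors
        self.consecutive_errors = 0

    def allows_processing(self) -> bool:
        # Kill switch: creating the stop file halts the run without a deploy.
        if self.stop_file.exists():
            return False
        # Error limit: too many failures in a row closes the gate.
        return self.consecutive_errors < self.max_consecutive_errors

    def record(self, success: bool) -> None:
        # A success resets the streak; a failure extends it.
        self.consecutive_errors = 0 if success else self.consecutive_errors + 1


def run_batch(invoices, process, gate):
    """Process invoices until the gate closes; return (done, failed, skipped)."""
    done, failed, skipped = [], [], []
    for invoice in invoices:
        if not gate.allows_processing():
            skipped.append(invoice)  # left untouched for a human to pick up
            continue
        try:
            process(invoice)
        except Exception:
            gate.record(success=False)
            failed.append(invoice)
        else:
            gate.record(success=True)
            done.append(invoice)
    return done, failed, skipped
```

With a gate like this, shutting the system down is a matter of creating one file, and a run of bad invoices stops itself after a few failures instead of after hundreds. A real deployment would probably want the gate to stay closed until someone resets it deliberately.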

Mistake 4: Ignoring Warning Signs

There were small failures before the big one. Invoices that didn't process correctly. Data that looked wrong. Errors that they brushed off as "minor."

They should have treated every error as a potential disaster. They should have investigated. They should have fixed things before they became problems.

Mistake 5: Poor Communication

They built the system. They deployed it. They assumed it was working.

But they didn't check in regularly. They didn't monitor it closely. They didn't communicate with stakeholders about what was happening.

They should have been more involved. More present. More communicative.

What We've Learned (From Watching This Pattern)

We've seen variations of this story play out across different companies and industries. Here's what we've learned:

Lesson 1: Start Small

Don't try to automate everything at once. Start with one thing. Get it perfect. Then expand.

Small wins build confidence. Big failures destroy it.

Lesson 2: Test for Failure

Don't test for the best case. Test for the worst case. Test with messy data. Test with edge cases. Test with things that will break.

If it can break, it will break. Better to find out in testing than in production.
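
As a rough illustration of testing the worst case first, the sketch below pairs a small amount parser with a list of inputs it must refuse. The names `parse_amount`, `UnreadableAmount`, and `BAD_INPUTS` are made up for this example, and the parser assumes US-style formatting (comma as thousands separator), which a real system would need to confirm per vendor.

```python
from decimal import Decimal, InvalidOperation


class UnreadableAmount(ValueError):
    """Raised when an amount cannot be parsed with confidence."""


def parse_amount(raw: str) -> Decimal:
    """Parse an amount such as '$1,234.50'; raise rather than guess."""
    # Assumption: commas are thousands separators, never decimal marks.
    cleaned = raw.strip().replace("$", "").replace(",", "")
    try:
        amount = Decimal(cleaned)
    except InvalidOperation:
        raise UnreadableAmount(f"cannot parse amount: {raw!r}") from None
    if not amount.is_finite() or amount <= 0:
        raise UnreadableAmount(f"implausible amount: {raw!r}")
    return amount


# Worst-case inputs: each of these must be rejected, not guessed at.
BAD_INPUTS = ["", "   ", "N/A", "1,2O0.00", "12.34.56", "-50.00", "NaN", "see attached"]


def rejected(raw: str) -> bool:
    """True if the parser refuses the input instead of returning a value."""
    try:
        parse_amount(raw)
    except UnreadableAmount:
        return True
    return False
```

The list of bad inputs is the valuable part. Every invoice that breaks something in production can be added to it, so the same failure cannot slip through twice.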

Lesson 3: Build in Safety

Every automation needs:

- A way to turn it off quickly

- A way to roll back

- Human oversight

- Error limits

- Validation checkpoints

Trust, but verify. And have a backup plan.
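
One way to picture a validation checkpoint with human oversight is a function that either accepts an invoice or sends it to a review queue with reasons attached. This is only a sketch: `ExtractedInvoice`, `checkpoint`, and the exact-match rule against the purchase order are assumptions made for illustration, not a description of any real accounting integration.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass
class ExtractedInvoice:
    """Hypothetical record; None means the field could not be extracted."""
    vendor: Optional[str]
    amount: Optional[Decimal]
    po_number: Optional[str]


def checkpoint(invoice, known_vendors, po_amounts):
    """Return ('accept', []) or ('review', reasons). Never repairs data."""
    reasons = []
    if invoice.vendor is None or invoice.vendor not in known_vendors:
        reasons.append("unknown or missing vendor")
    if invoice.amount is None:
        reasons.append("missing amount")
    if invoice.po_number not in po_amounts:
        reasons.append("no matching purchase order")
    elif invoice.amount is not None and invoice.amount != po_amounts[invoice.po_number]:
        reasons.append("amount differs from purchase order")
    return ("review", reasons) if reasons else ("accept", [])
```

The important design choice is what the function does not do: it never fills in or corrects a value. Anything it cannot confirm goes to a person.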

Lesson 4: Monitor Everything

Don't deploy and forget. Monitor. Watch. Check in regularly.

Small problems become big problems if you ignore them. Catch issues early. Fix them before they escalate.
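
Catching issues early usually means watching a number, not waiting for a complaint. The sketch below tracks the failure rate over the most recent invoices and says when it crosses a threshold. `ErrorRateMonitor`, the window of 50, and the 5% threshold are illustrative assumptions; sensible values depend on volume and on how costly a bad entry is.

```python
from collections import deque


class ErrorRateMonitor:
    """Hypothetical monitor: failure rate over the most recent `window` invoices."""

    def __init__(self, window: int = 50, alert_threshold: float = 0.05):
        # Each entry is True if that invoice failed; old entries fall off the left.
        self.outcomes = deque(maxlen=window)
        self.alert_threshold = alert_threshold

    def record(self, failed: bool) -> None:
        self.outcomes.append(failed)

    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        # Alert only when the rate is strictly above the threshold.
        return self.error_rate() > self.alert_threshold
```

A rolling window matters here because a lifetime average hides a sudden spike: a system that handled a thousand invoices cleanly last week can fail twenty in a row today and still look healthy overall.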

Lesson 5: Communicate Constantly

Keep stakeholders informed. Tell them what's working. Tell them what's not. Tell them what you're fixing.

Transparency builds trust. Silence builds suspicion.

How to Avoid This (The Right Way)

Based on what we've seen work, here's the approach that prevents these failures:

Phase 1: Document the Manual Process First

Don't automate anything yet. Document the manual process. Every step. Every decision point. Every edge case.

You need to understand the process perfectly before you try to automate it.

Phase 2: Automate One Step

Start with just one step. Extract data from invoices. That's it. No validation. No database entry. Just extraction.

Test it thoroughly. With messy invoices. With edge cases. With everything that could go wrong.

When it works perfectly, move to the next step.
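
An extraction-only step might look something like the sketch below. It assumes the PDF has already been converted to plain text by some other tool, and the field labels it searches for ("Invoice No", "Total") are guesses at a common layout, not a standard. `Extraction` and `extract` are hypothetical names.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Assumed label formats; real invoices vary and will need more patterns.
INVOICE_NO = re.compile(r"Invoice\s*(?:No\.?|#)\s*:?\s*([A-Z0-9-]+)", re.IGNORECASE)
# \b keeps "Subtotal" from being mistaken for "Total".
TOTAL = re.compile(r"\bTotal\s*:?\s*\$?\s*([0-9][0-9,]*\.[0-9]{2})", re.IGNORECASE)


@dataclass
class Extraction:
    invoice_number: Optional[str] = None
    total: Optional[str] = None
    missing: list = field(default_factory=list)


def extract(text: str) -> Extraction:
    """Pull fields out of invoice text. Missing fields stay None."""
    result = Extraction()
    match = INVOICE_NO.search(text)
    if match:
        result.invoice_number = match.group(1)
    else:
        result.missing.append("invoice_number")
    match = TOTAL.search(text)
    if match:
        result.total = match.group(1)
    else:
        result.missing.append("total")
    return result
```

Note that the step writes nothing anywhere. It returns what it found and lists what it could not find, which makes it easy to run against a pile of messy invoices and compare the output with what a person would have entered.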

Phase 3: Add Steps Gradually

One step at a time. Test thoroughly. Get it perfect. Then add the next step.

Slow and steady. Boring, but safe.

Phase 4: Build in Safety

Every step should have:

- Error handling

- Validation

- Human review points

- A kill switch

- Monitoring

Trust the system, but verify everything.
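
For error handling in particular, the failure described earlier came from a system that kept retrying and "fixing" its own output. A minimal sketch of the alternative is a fixed retry budget followed by a handoff to a person. `process_with_retries` and the shape of the returned dictionary are hypothetical, chosen only to show the idea.

```python
def process_with_retries(item, step, max_attempts=3):
    """Try `step` a fixed number of times, then stop and hand off to a human."""
    errors = []
    for attempt in range(1, max_attempts + 1):
        try:
            return {"status": "ok", "result": step(item), "attempts": attempt}
        except Exception as exc:
            # Keep a record of each failure for whoever reviews the item.
            errors.append(f"attempt {attempt}: {exc}")
    # Retries are exhausted: return the untouched item instead of "fixing" it.
    return {"status": "needs_review", "item": item, "errors": errors}
```

The loop has a hard upper bound, and the item is passed to each attempt unchanged, so a retry can never build on the damage of the previous one.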

Phase 5: Monitor and Iterate

Watch it closely. Check in daily. Fix issues immediately. Improve continuously.

It's not "set it and forget it." It's "set it and watch it carefully."

What This Means for You

If you're building automations, here's what to take away:

Don't Try to Do Everything at Once

Start small. Get one thing working perfectly. Then expand.

Test Thoroughly

Test with messy data. Test edge cases. Test failure scenarios. If it can break, test it breaking.

Build in Safety

Have a kill switch. Have a rollback plan. Have human oversight. Have error limits.

Monitor Closely

Don't deploy and forget. Watch it. Check it. Fix issues early.

Communicate Constantly

Keep stakeholders informed. Be transparent about what's working and what's not.

The Silver Lining

Here's the thing: failures teach more than successes.

When companies learn from these mistakes, they build:

- Safer automations

- Better testing processes

- Stronger communication

- More robust recovery plans

- Systems that actually work

The companies that succeed aren't the ones that never fail. They're the ones that learn from failures—their own or others'.

Conclusion

Not every automation project succeeds. Some fail. Some fail spectacularly.

But failure isn't the end. It's a lesson. And if you learn from it—whether it's your failure or someone else's—you'll build better systems. Safer systems. Systems that actually work.

The key is to be aware of what can go wrong. To learn from mistakes. To build differently.

The story we told here? It's fictional. But the mistakes are real. The patterns are common. The lessons are valuable.

And that's what matters.

Want to avoid making these mistakes? Start small. Test thoroughly. Build in safety. Monitor closely. And remember: every failure is a lesson, if you're willing to learn from it.